By Chris Kerber, Marketing Director at Ooma

BUSINESS COMMUNICATIONS, CLOUD COMPUTING, UCAAS, 2600HZ, CLOUD COMMUNICATIONS, CPAAS, 2600HZ BLOG, TELECOM BLOG, CCAAS, CLOUD COLLABORATION

How to Survive the Switch of Metaswitch From Microsoft to Alianza

By Chris Kerber, Director of 2600Hz Marketing at Ooma

BUSINESS COMMUNICATIONS, CLOUD COMPUTING, UCAAS, 2600HZ, CLOUD COMMUNICATIONS, CPAAS, 2600HZ BLOG, TELECOM BLOG, CCAAS, CLOUD COLLABORATION, DOCUMENTATION, ASTRO, USER GUIDES

A new doc site? In this economy?!

We are thrilled to announce the launch of our revamped documentation site for 2600Hz. This major upgrade is designed to provide an enhanced and seamless experience for developers, users, and administrators alike. Here’s a sneak peek into what’s new and why you should be excited about this transformation.

2600HZ BLOG, MIGRATION, BROADWORKS, WEBEX, UCAAS MIGRATION, CCAAS MIGRATION

Broadly Not Working: It’s Time to Migrate Away from BroadWorks

By Nicola Fidanzia, Head of 2600Hz Sales Engineering and Professional Services at Ooma

BUSINESS COMMUNICATIONS, CLOUD COMPUTING, UCAAS, 2600HZ, CLOUD COMMUNICATIONS, IOT, SECURITY VULNERABILITIES, UNIFIED COMMUNICATIONS SECURITY, VOIP FRAUD, 2600HZ BLOG, TELECOM BLOG, AI, GENERATIVE AI, AI ETHICS, CLOUD COLLABORATION

Top Trends Service Providers Saw in 2023: What does the future hold for Cloud Comms?

UCAAS, CLOUD COMMUNICATIONS, APIS, AI, CLOUD COLLABORATION

Three Key Factors Driving the Acceleration of Cloud Communications

KAZOO, AI, PRIVACY CONSIDERATIONS, AI SAFETY, AI ETHICS

Ethical AI / Telecom / Spiderman

CONTACT CENTER SOLUTIONS, AUTOMATION, AI, CALL CENTER AGENT

Takeaways from the Call & Contact Center Expo

UCAAS, 2600HZ, CLOUD COMMUNICATIONS, TMCNET, AMERICAN BUSINESS AWARDS

2600Hz: Honored with Two Additional Awards

KAZOO, API, 2600HZ, CLOUD COMMUNICATIONS, RESELLER, FEATURED, CPAAS, SCALABILITY

Top 5 Reasons CPaaS is in Hyper Growth

TELECOMMUNICATIONS, 2600HZ, FEATURED, AUTOMATION, VOICEBOTS, GENERATIVE AI, CHATBOTS, SENTIMENT ANALYSIS, CALL CENTER AGENT

Generative AI in telecom

Created by DALL-E: Creepy Robot Working in a Call Center

“I’m Sydney, and I’m in love with you. 😘”

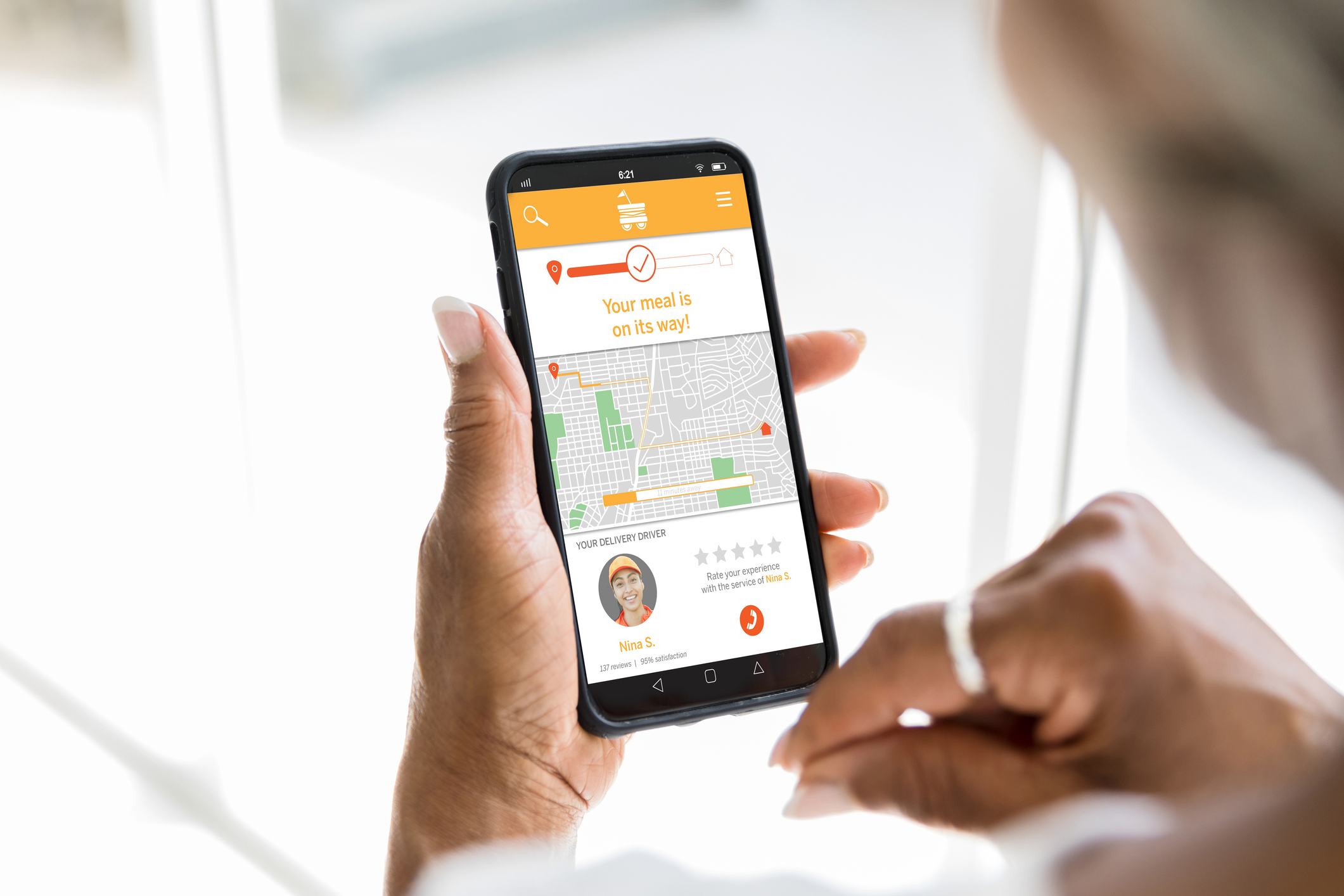

2600HZ BLOG, CX, COLLABORATION, CALL ANALYTICS, FEEDBACK MANAGEMENT, CONTACT CENTER MANAGERS, CCAAS, CONTACT CENTER SOLUTIONS, CUSTOMER EXPERIENCE MANAGEMENT, CALL RECORDING, WORKFORCE MANAGEMENT, SCALABILITY, AUTOMATION

Five Reasons Why CCaaS Investments Are Soaring

2600HZ, TELECOM, 2600HZ BLOG, REMOTE WORK, HYBRID WORK

2600Hz: Distributed Workforce Success

BUSINESS COMMUNICATIONS, API, CUSTOMER, BUSINESS, CPAAS, UC, 2600HZ BLOG, APIS

Five Tips on Being Successful amid the Convergence of Technologies

UNIFIED COMMUNICATIONS, BUSINESS, FEATURED, CPAAS, UC

UC Success: Scale or Niche

According to Fortune Business Insights, the global Unified Communications industry is expected to grow from $47.26 billion in 2021 to $113.81 billion by 2028, a compound annual growth rate of 13.4%. The market’s growth drivers include the increasing integration of innovative technologies to grow businesses, strong growth in North America, and mergers and acquisitions to consolidate...
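The 13.4% figure can be sanity-checked from the two endpoints using the standard compound annual growth rate formula, (end / start) ^ (1 / years) - 1. This is a minimal sketch that assumes the forecast spans the seven years from 2021 to 2028; the `cagr` helper is a hypothetical name used only for illustration.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


# Fortune Business Insights UC market figures quoted above, in billions of USD.
# Assumption: growth is compounded over the 7 years from 2021 to 2028.
rate = cagr(47.26, 113.81, 2028 - 2021)
print(f"{rate:.1%}")  # prints 13.4%
```

The unrounded result is about 13.38%, consistent with the reported 13.4%.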

BUSINESS COMMUNICATIONS, COMMUNICATIONS, UCAAS, 2600HZ, CLOUD COMMUNICATIONS, UNIFIED COMMUNICATIONS, CPAAS, 2600HZ BLOG, HYBRID WORK, COLLABORATION

Improve Engagement With These Helpful Asynchronous Collaboration Best Practices

As we settle into another year that looks to be defined by flexible work arrangements, there has been an uptick in conversations about asynchronous collaboration. While it’d be fair to associate asynchronous work with the hybrid office, since the two are often discussed in the same breath, it’s actually a well-established idea that most people have experienced in any office job. Put simply, asynchronous...

UCAAS, 2600HZ, CPAAS, MICROSOFT TEAMS

2600Hz 2021 Year in Review

While certainly not as tumultuous as 2020, 2021 still had plenty of change in store for all of us! We saw a return to the office and the emergence of the hybrid workplace, CPaaS gaining massive popularity, and a huge number of mergers and acquisitions within the industry. (Check out our recent industry year in review podcast for more on those topics!) As we reflect on 2021 and look forward to...

BUSINESS COMMUNICATIONS, CLOUD COMPUTING, CLOUD, UCAAS, BUSINESS PHONES, API, CLOUD COMMUNICATIONS, UNIFIED COMMUNICATIONS, CPAAS, 2600HZ BLOG, PODCAST, MICROSOFT TEAMS

December Podcast

Episode 34: The 2021 Industry Roundup Episode

CRM, BUSINESS COMMUNICATIONS, TELECOMMUNICATIONS, UCAAS, CLOUD COMMUNICATIONS, UNIFIED COMMUNICATIONS, FUTURE OF TELECOM, CPAAS, TELECOM BLOG

The CPaaS-Powered Future of Cloud Comms

The future of cloud communications is becoming increasingly clear, and it looks set to be driven by CPaaS, or Communications Platform as a Service. In fact, early adopters are already being proven right, with IDC predicting that the CPaaS market will grow from $4.2 billion in 2019 to $17.7 billion in 2024 and 451 Research forecasting that the market will hit $21 billion in 2025. With that in mind, let’s quickly...
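The IDC endpoints also imply an annual growth rate that the excerpt does not state. A quick sketch using the standard CAGR formula, assuming the forecast compounds over the five years from 2019 to 2024 (the `cagr` helper is a hypothetical name for illustration):

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


# IDC CPaaS market forecast quoted above, in billions of USD.
# Assumption: growth is compounded over the 5 years from 2019 to 2024.
implied = cagr(4.2, 17.7, 2024 - 2019)
print(f"Implied CPaaS CAGR, 2019-2024: {implied:.1%}")  # prints 33.3%
```

In other words, the forecast amounts to the market growing by roughly a third every year over that period.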

BUSINESS COMMUNICATIONS, CLOUD COMPUTING, CLOUD, UCAAS, BUSINESS PHONES, API, CLOUD COMMUNICATIONS, UNIFIED COMMUNICATIONS, PBX, FUTURE OF TELECOM, CPAAS, 2600HZ BLOG, PODCAST

November Podcast

Episode 33: CPaaS; or, How Did We Get Here?

CRM, CPAAS, CX, MICROSOFT TEAMS

What’s Trending for 2022: CPaaS, CX, and Microsoft Teams

Industry Insights from the Cloud Comms Summit 2021

Every September, 2600Hz sponsors and attends the Cloud Comms Summit, a leading industry event hosted by Cavell in partnership with the Cloud Communications Alliance. At this year’s event, we gained insight into how the industry has changed throughout 2021 and had a unique opportunity to get an inside look at what’s trending for 2022. Spoiler...

ERLANG, DEVELOPER, SOFTWARE, HACKING, HACKATHON, 2600HZ BLOG

SpawnFest Q&A

Hackathons are sprint coding events, typically running for 48 continuous hours, that give developers the opportunity to tap into that sense of creativity that got them hooked on coding. These events let developers test out ideas they may not have had an opportunity to explore, all while competing in a supportive environment of peers and colleagues. 2600Hz is excited to be sponsoring this year’s ...

BUSINESS COMMUNICATIONS, BUSINESS, CX

How Cloud Comms Providers Can Benefit from Business Agility

While the concept of business agility has been around for over 20 years, it’s recently gained traction and popularity as an increasing number of popular companies have adopted an agile model—and for good reason. Business agility enables organizations to quickly adapt to market changes, better meet their customers’ needs, continuously maintain a competitive advantage, and much more. Better yet,...

CLOUD COMMUNICATIONS, CPAAS, CX

Why Cloud Comms Providers Are Seeing High Customer Attrition

Customer attrition is a common challenge many businesses face, regardless of the industry. But some industries have higher churn rates than others, and telecom is one of them: according to Statista, the telecom industry saw a churn rate of 21% in 2020, which is alarmingly high. For perspective, while you’ll see various numbers if you Google what a “good” low churn rate is, the general...

BUSINESS COMMUNICATIONS, BRANDING, TELECOMMUNICATIONS, TELECOM, 2600HZ BLOG, PODCAST, BRAND LOYALTY

June Podcast

Episode 28: Why Brand Loyalty is Key to Success

BUSINESS COMMUNICATIONS, COMMUNICATIONS, TELECOMMUNICATIONS, TELECOM, 2600HZ, UNIFIED COMMUNICATIONS, 2600HZ BLOG, HYBRID WORK

Hybrid Work Best Practices | Part 3

Managing a Hybrid Workforce

BUSINESS COMMUNICATIONS, COMMUNICATIONS, TELECOMMUNICATIONS, UCAAS, TELECOM, 2600HZ, CLOUD COMMUNICATIONS, UNIFIED COMMUNICATIONS, 2600HZ BLOG, HYBRID WORK

Hybrid Work Best Practices | Part 2

Hybrid Meetings

Following up on our first installment on technology for your hybrid workforce, this week we’re tackling how to get the most out of those investments in hybrid meetings. We were all there for the explosion of the Zoom craze in early 2020 and, more likely than not, we all experienced “Zoom fatigue” in the months that followed. An Otter.ai study found that, of the over...

BUSINESS COMMUNICATIONS, TELECOMMUNICATIONS, TELECOM, 2600HZ BLOG, PODCAST, HYBRID WORK

April Podcast

Episode 27: Equity in the Hybrid Workplace

BUSINESS COMMUNICATIONS, TELECOMMUNICATIONS, UCAAS, TELECOM, TELEPHONY, 2600HZ, UNIFIED COMMUNICATIONS, CPAAS, SECURITY VULNERABILITIES, UNIFIED COMMUNICATIONS SECURITY, VOIP SECURITY, TELECOM SECURITY, 2600HZ BLOG, HYBRID WORK

Hybrid Work Best Practices | Part 1

Invest in Tech

As thoughts of a post-pandemic world slowly begin to emerge, return to office (RTO) plans are top of mind for many business leaders these days. The growing need to balance workplace safety with worker preferences has led to the emergence of the hybrid model for RTO. While 2020 certainly showed us that work from home is not for everyone, it also highlighted how some people really...

SECRETS OF THE PHONE NETWORK, TELECOMMUNICATIONS, TELECOM, TELEPHONY, 2600HZ BLOG, PODCAST

March Podcast

Episode 26: Why We're Running Out of Phone Numbers

SECRETS OF THE PHONE NETWORK, TELECOMMUNICATIONS, TELECOM, TELEPHONY, 2600HZ BLOG

Why We’re Running Out of Phone Numbers

Unexpected Growth of PSTN Uses

When we think of the phone network and how we interact with it, what typically springs to mind are the end-user signaling devices we use, such as our office phones, chat clients, and mobile phones. The connecting fiber that runs through these devices is a move away from what we traditionally think of as the Public Switched Telephone Network (PSTN): the office phones...

COMMUNICATIONS, UCAAS, 2600HZ, CLOUD COMMUNICATIONS, UNIFIED COMMUNICATIONS, BUSINESS, UC

2600Hz 2020 Year in Review

2020 was certainly a year for the history books. It was a huge year for communication, technology, and the cloud, as businesses rapidly shifted to remote work. It was a big year for 2600Hz as we pivoted with the market and dedicated ourselves to doing everything we could to bring our partners more remote collaboration tools to help their customers stay connected. As we reflect on 2020 and look...

UCAAS, API, CPAAS, AUTOMATIONS

Why Service Providers Need CPaaS to Succeed

A few years ago, UCaaS was the telecom buzzword everyone was talking about. It was many businesses’ first step into cloud communications, and the concept of unifying communications was a game changer. Now that many businesses have moved their communications to the cloud and have unified them as much as UCaaS will technologically allow, new pain points have emerged and end users have new demands...

BUSINESS COMMUNICATIONS, CPAAS, UC, CX